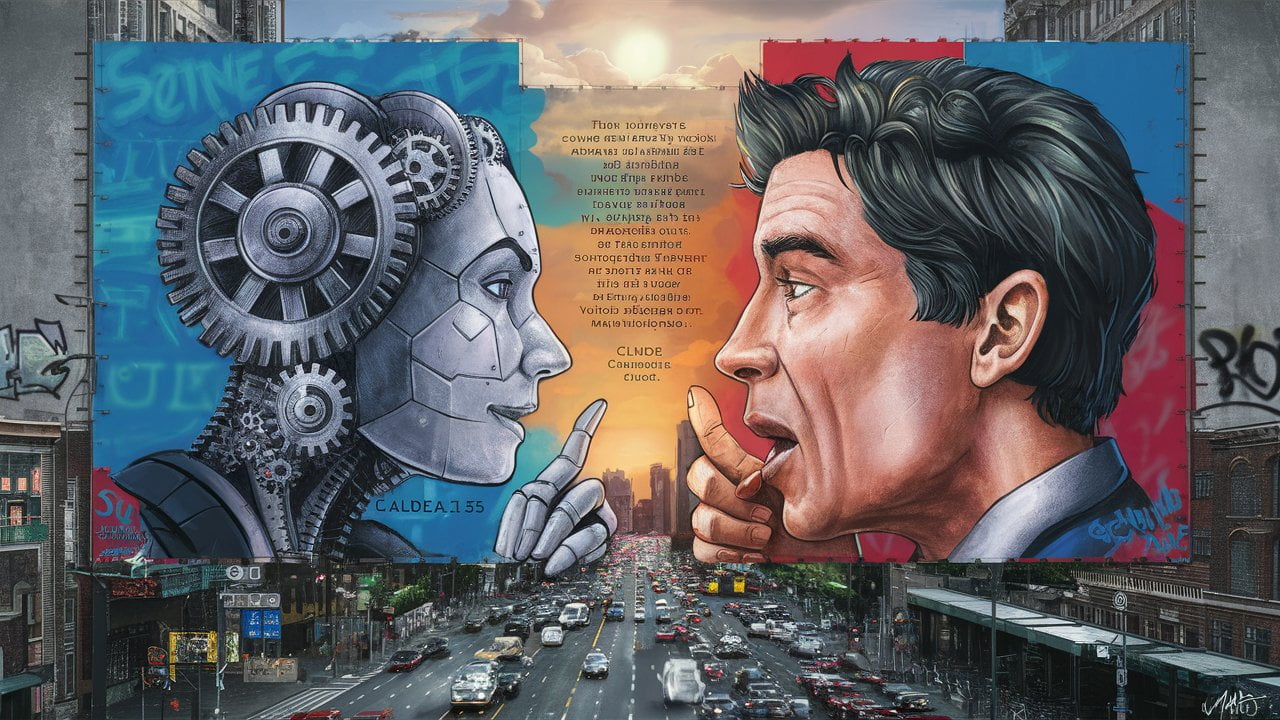

I opened Claude 3.5 Sonnet that day for more than a quick answer. I wanted to see what would happen if I treated the model less like a search box and more like an interlocutor. Not a person, obviously. Not an oracle either. Something stranger than both: a system that can be genuinely useful while still remaining partly opaque, occasionally slippery, and easy to overestimate.

That conversation stayed with me because it cut through a lot of the noise that usually surrounds AI. Once you move beyond the launch-day hype, the real questions are not about whether these systems are impressive. They clearly are. The real questions are about how to work with them without outsourcing your judgment.

What Makes AI Feel Different

I have lived through enough tech cycles to know that every era thinks its new tools are unprecedented. Usually that is only half true. With AI, though, there really is something unusual going on.

Most technologies become easier to understand as they become more useful. AI has taken a stranger path. Even the people building the most advanced systems still discover behaviors they did not explicitly script. Users, meanwhile, are trying to operationalize tools whose failure modes are not always obvious until they matter.

That is what makes this moment feel distinct to me. We are not just adopting a new tool. We are learning how to collaborate with a system whose capabilities are real, but whose internal reasoning is only partially legible.

The Black Box Problem Is Real, but So Is the Lazy Version of It

People love calling AI a black box, and I understand why. If a model produces a strong answer in one moment and a baffling one in the next, opacity is the first word that comes to mind.

But I have also started to think that “black box” can become a conversational shortcut that hides as much as it reveals.

Large language models are not unknowable in some mystical sense. We do understand a great deal about training processes, token prediction, how outputs depend on the context a model is given, and architectural constraints. Researchers are steadily making progress in interpretability. The real issue is not total darkness. The issue is that our visibility is incomplete, uneven, and often insufficient for high-stakes use.

That distinction matters. If we tell ourselves the system is pure mystery, we excuse sloppy practice. If we pretend it is fully understandable, we become reckless. The more honest position sits in the middle: we know enough to use these tools productively, but not enough to use them casually everywhere.

Variability Is the Part People Underestimate

One theme that kept resurfacing in my exchange with Claude was output variability.

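Ask the same system the same question twice and you may not get the same answer, the same emphasis, or even the same confidence profile. That is not a bug in the narrow sense. It is part of how these systems generate language: at each step the model assigns probabilities to possible next tokens, and the usual default is to sample from that distribution rather than always take the single most likely option. The stochastic element is also part of what makes them flexible and creative.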
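To make the stochastic element concrete, here is a toy sketch of temperature sampling, a common mechanism for picking the next token. Everything in it is illustrative: the three-word vocabulary and its scores are made up, and real systems work over far larger vocabularies and often layer on other settings such as top-p. The point is only that the same scores can yield different picks on different draws, while greedy selection (temperature 0 here) always returns the same one.

```python
import math
import random

def softmax(logits, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature, rng):
    """Greedy (argmax) pick at temperature 0, otherwise a weighted random draw."""
    if temperature == 0:
        return vocab[max(range(len(logits)), key=lambda i: logits[i])]
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Invented scores for the word that follows "The policy is ..."
vocab = ["clear", "ambiguous", "outdated"]
logits = [2.0, 1.5, 0.5]

rng = random.Random(7)
print([sample_next_token(vocab, logits, 1.0, rng) for _ in range(5)])  # picks vary across draws
print([sample_next_token(vocab, logits, 0, rng) for _ in range(5)])    # always "clear"
```

Hosted services may not expose these settings directly, and even low-temperature configurations may not guarantee identical outputs, so repeatability is worth measuring rather than assuming.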

Still, variability becomes a real problem the moment somebody mistakes fluency for reliability.

In consulting, research, or humanitarian work, that distinction matters a great deal. A model that gives you three slightly different summaries might be fine for brainstorming. A model that gives you three slightly different interpretations of a policy document can create downstream confusion very quickly. That is one reason I have become much more deliberate about repeatability, logging, and evaluation. The conversational surface is smooth. The operational reality underneath it is not.
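The repeatability and logging habits mentioned above can be sketched as a small harness: send one prompt several times, keep a timestamped record of every answer, and report how often the answers agree. `ask_model` is a hypothetical placeholder for whatever client you actually use, and `fake_model` below simply simulates an inconsistent model. Exact-match agreement after light normalization only makes sense for short, categorical answers; comparing free-text summaries would need a more careful similarity measure.

```python
import hashlib
import json
import random
from collections import Counter
from datetime import datetime, timezone

def repeatability_check(ask_model, prompt, runs=5):
    """Send the same prompt several times, log every answer, and report agreement."""
    prompt_id = hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:12]  # stable ID for the log
    log = []
    for i in range(runs):
        log.append({
            "prompt_id": prompt_id,
            "run": i,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "answer": ask_model(prompt),
        })
    # Light normalization so case and stray whitespace do not count as disagreement.
    normalized = [" ".join(entry["answer"].lower().split()) for entry in log]
    majority, count = Counter(normalized).most_common(1)[0]
    return {"prompt_id": prompt_id, "agreement": count / runs, "majority": majority, "log": log}

# Hypothetical stand-in for a real model client; it varies its answer on purpose.
_rng = random.Random(0)

def fake_model(prompt):
    return _rng.choice(["Eligible", "eligible ", "Not eligible"])

report = repeatability_check(fake_model, "Is this applicant eligible? Answer in one or two words.", runs=10)
print(report["agreement"], report["majority"])
print(json.dumps(report["log"][0], indent=2))
```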

This is also where my broader unease about human-AI interaction shows up. We are remarkably willing to assign coherence, intent, and authority to anything that sounds articulate. I unpacked that tendency more directly in “The ELIZA Effect and the Future of Human-AI Interaction,” but the short version is simple: a persuasive tone can hide a fragile process.

The Working Rules I Keep Coming Back To

That conversation with Claude did not leave me with a neat doctrine. It left me with a set of practical habits.

- I try to write prompts as if clarity were part of the output quality, because it is.

- I verify important answers against external sources instead of rewarding the model merely for sounding plausible.

- I log workflows that matter, especially when the same task will be repeated by a team.

- I define what “good” looks like before I ask the model to produce anything ambitious.

- I assume human review is part of the system, not an optional cleanup step.
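Two of those habits, defining “good” up front and treating human review as part of the system, can be combined in a short sketch. The criteria below are hypothetical examples for a policy summary, and the keyword checks are deliberately crude. The design choice worth noting is that passing every check never marks an output as approved, only as ready for a person to review.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass(frozen=True)
class Criterion:
    """One written-down definition of 'good', agreed before the model is asked anything."""
    name: str
    check: Callable[[str], bool]

# Hypothetical criteria for a short policy summary; a real team would write its own.
SUMMARY_CRITERIA: List[Criterion] = [
    Criterion("under_150_words", lambda text: len(text.split()) <= 150),
    Criterion("cites_a_source", lambda text: "source:" in text.lower()),
    # Crude substring check: it detects hedging words, not whether the hedging is honest.
    Criterion("flags_uncertainty", lambda text: any(w in text.lower() for w in ("unclear", "uncertain", "may"))),
]

def triage(output: str, criteria: List[Criterion]) -> Dict[str, object]:
    """Check an output against pre-agreed criteria.

    Passing never means 'approved'; it only means a human reviewer looks at it next.
    """
    failed = [c.name for c in criteria if not c.check(output)]
    status = "rework" if failed else "ready_for_human_review"
    return {"status": status, "failed": failed}

draft = "The rule may cover seasonal workers, but the memo is unclear on part-time staff. Source: internal policy memo."
print(triage(draft, SUMMARY_CRITERIA))                  # {'status': 'ready_for_human_review', 'failed': []}
print(triage("Everyone qualifies.", SUMMARY_CRITERIA))  # {'status': 'rework', 'failed': ['cites_a_source', 'flags_uncertainty']}
```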

None of this is glamorous, but that is exactly the point. Serious AI usage looks less like magic and more like instrumentation.

Auditability Is Where the Real Work Begins

The deeper I go into practical AI usage, the more I think auditability is the dividing line between playful experimentation and responsible deployment.

If a model is helping shape recommendations, classifications, summaries, or decisions that affect real people, then “trust me, the model is usually good” is not a serious operating philosophy. You need audit trails. You need benchmarks. You need to know what changed when performance drifts. You need to understand which tasks deserve a simpler, more interpretable method instead of a more impressive one.
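One way to make “know what changed when performance drifts” concrete is to rerun a fixed benchmark whenever the model, prompt, or settings change, and record which individual cases flipped rather than only the headline score. This is a minimal sketch under assumed conditions: the benchmark items, labels, and the two keyword-based model versions are invented stand-ins for real systems.

```python
from typing import Callable, Dict, List, Tuple

# A benchmark is a fixed list of (input, expected label) pairs that stays the same between runs.
Benchmark = List[Tuple[str, str]]

def run_benchmark(classify: Callable[[str], str], benchmark: Benchmark) -> Dict[str, bool]:
    """Record pass/fail for every benchmark case under one model version."""
    return {text: classify(text) == expected for text, expected in benchmark}

def compare_versions(old: Dict[str, bool], new: Dict[str, bool], tolerance: float = 0.0) -> dict:
    """Compare two runs of the same benchmark and name the cases that changed."""
    old_acc = sum(old.values()) / len(old)
    new_acc = sum(new.values()) / len(new)
    return {
        "old_accuracy": old_acc,
        "new_accuracy": new_acc,
        "drifted": (old_acc - new_acc) > tolerance,
        "regressions": [case for case in old if old[case] and not new[case]],
        "fixes": [case for case in old if not old[case] and new[case]],
    }

# Invented benchmark and two hypothetical model versions (keyword rules standing in for real models).
BENCHMARK: Benchmark = [
    ("No clean water since Monday", "urgent"),
    ("Request to update mailing address", "routine"),
    ("Clinic closed and child has a high fever", "urgent"),
]

def model_v1(text: str) -> str:
    return "urgent" if ("water" in text or "fever" in text) else "routine"

def model_v2(text: str) -> str:
    return "urgent" if "fever" in text else "routine"

report = compare_versions(run_benchmark(model_v1, BENCHMARK), run_benchmark(model_v2, BENCHMARK))
print(report["drifted"], report["regressions"])  # True ['No clean water since Monday']
```

Listing regressions case by case matters because two versions can post the same overall score while failing on different requests, and an aggregate number alone would hide that.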

This is especially relevant in the kind of social-impact and policy-adjacent work I care about. Communities do not benefit from opaque automation simply because it is fashionable. They benefit when the system is well-bounded, well-documented, and used with enough humility to admit uncertainty.

My Real Takeaway

What stayed with me after that exchange was not awe. It was respect, mixed with caution.

Claude was useful. Often very useful. But the real value of the conversation came from how clearly it exposed the terms of engagement. AI is not something I want to worship or dismiss. I want to work with it in a way that is traceable, grounded, and honest about what it can and cannot do.

That, to me, is the mature posture. Curiosity without surrender. Optimism without naivete. Experimentation without pretending that fluency equals understanding.

We are still early in this era, which means good habits formed now will matter more than polished opinions. I am less interested in asking whether AI is amazing than in asking whether we are building the discipline required to use it well.
