There is a particular kind of frustration I know very well by now.

You click a dashboard. You wait. You send a request to some polished service that promises intelligence, convenience, or control. The spinner turns for a little longer than it should. Then a little longer still. Maybe it resolves. Maybe it times out. Maybe it comes back fast today and sluggish tomorrow for reasons no marketing page will ever explain.

If you live close to the main arteries of the cloud, this is annoying.

If you live farther from the center, it starts to feel revealing.

That is one reason I have become increasingly suspicious of the way we talk about latency. In technical writing, latency is usually treated as a neutral engineering variable. A performance number. A systems constraint. Something to optimize.

All of that is true.

But from here, it can also feel political.

Convenience Is a Geography Problem in Disguise

The modern cloud likes to present itself as universal. Click the API. Open the console. Spin up the service. Route the traffic. Intelligence and infrastructure are available on demand, everywhere, for everyone.

That is the dream surface. The lived surface is messier.

When you are working from Jordan, or from many other places outside the main gravitational centers of the internet, you become very aware that some kinds of technical convenience were designed around somebody else’s default conditions. Better peering. Lower round-trip times. More local hosting options. Faster support assumptions. Fewer everyday penalties for depending on a service that lives somewhere far away, under someone else’s jurisdiction, with someone else’s priorities.

Again, this is not a complaint about physics. Distance exists. Packets take time. Infrastructure is uneven.

The political part is that we often pretend this unevenness is incidental when, in practice, it shapes who gets the smooth version of modern computing and who gets the workaround version.

Distance Changes How You Think About Dependencies

I notice this most clearly whenever a workflow depends on multiple remote services chained together.

One API talks to another. A dashboard depends on a provider. A generation request has to leave the machine, cross regions, hit a model endpoint, return through another service, and then render inside some product layer that wants me to feel like everything is immediate and frictionless.

It is easy to love that stack when it behaves.

It is harder to love it when every layer reminds you that your work is only as stable as several distant systems staying healthy at the same time.
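A little arithmetic makes that concrete. The sketch below assumes a purely serial chain in which every layer must be up and every hop must finish before the next begins, and it assumes failures are independent, which real outages often are not. The layer names and numbers are hypothetical, chosen only for illustration.

```python
from math import prod


def chain_availability(availabilities):
    """Chance that every layer in a serial chain is healthy at the same time.

    Assumes independent failures, which is a simplification.
    """
    return prod(availabilities)


def chain_latency_ms(round_trips_ms):
    """Total wait when each hop has to finish before the next one starts."""
    return sum(round_trips_ms)


# Hypothetical layers: (availability, round-trip time in milliseconds).
layers = {
    "product dashboard": (0.999, 180),
    "orchestration API": (0.999, 220),
    "model endpoint": (0.995, 900),
    "rendering service": (0.999, 150),
}

availability = chain_availability(a for a, _ in layers.values())
latency = chain_latency_ms(ms for _, ms in layers.values())

print(f"combined availability: {availability:.4f}")
print(f"sequential latency: {latency} ms")
```

With these made-up figures, four layers that each look dependable on their own combine to roughly 99.2 percent availability and 1,450 ms of waiting. No single layer is bad. The chain is simply longer than it feels.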

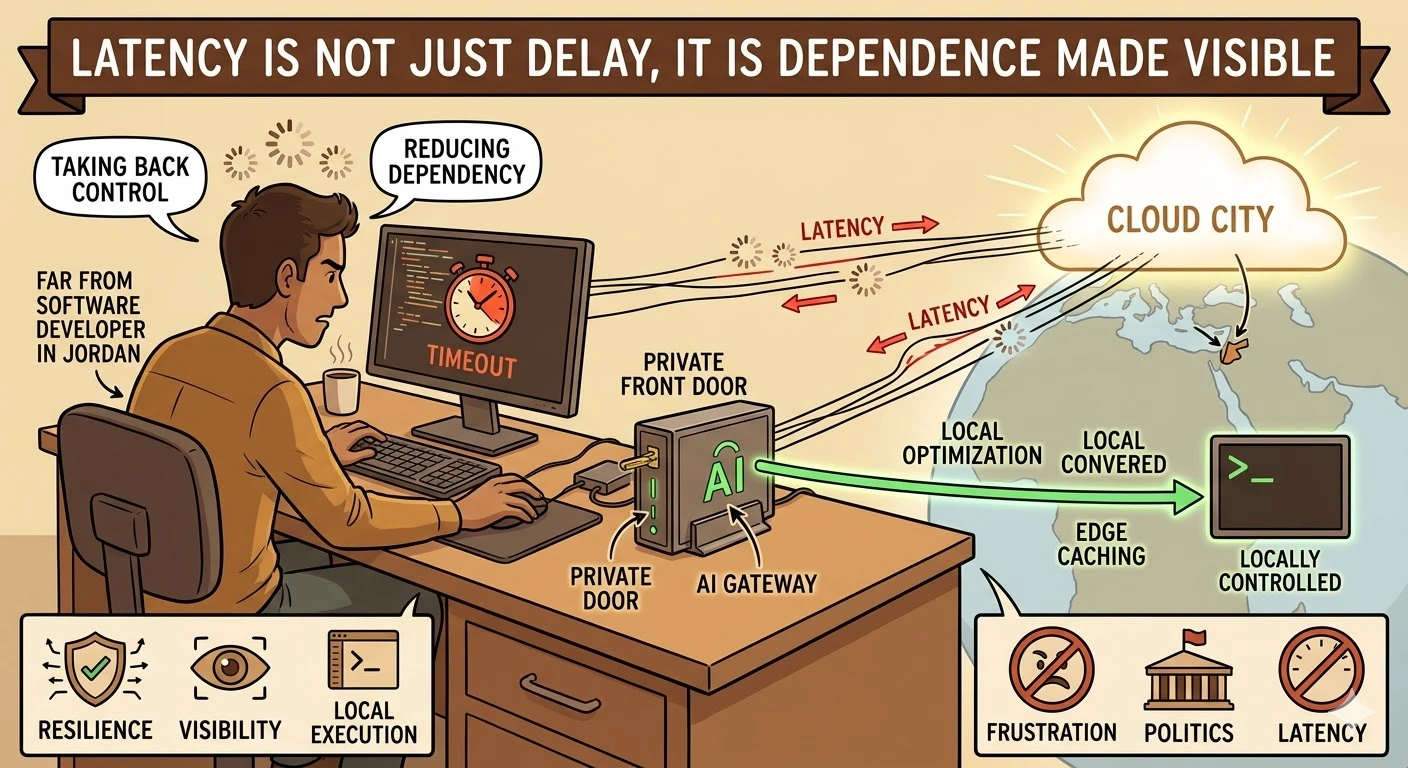

That feeling is part of what pushed me toward building my own intermediary layers. In AI Proxies, LLMs, Arabic Language Performance, and OBSBOT Tiny2 for Podcasting, I wrote about the practical appeal of putting a private front door in front of AI tools. The point was not only convenience. It was also visibility and control.

When Cloudflare later released their brilliant AI Gateway, it felt like a quiet validation of this exact mindset. My own homemade system—which, if I am being honest, was little more than a collection of routing scripts—became completely obsolete almost overnight. But it shared the same underlying philosophy. Cloudflare’s gateway does at a massive, global edge scale what I was trying to piece together locally. It acts as an intelligent intermediary between your application and distant AI models, providing an essential layer of caching, analytics, and fallback routing. By caching frequent responses closer to the user, it directly mitigates the latency penalty of endless round-trips to remote data centers. By allowing developers to gracefully route around degraded API endpoints or switch models on the fly, it treats provider instability as a structural reality rather than a temporary glitch.
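The general shape of such an intermediary is simple enough to sketch in a few lines. What follows is not Cloudflare's API and not my original scripts. It is a minimal, hypothetical `TinyGateway` that shows the three ideas together: answer repeated prompts from a cache, fall through an ordered list of providers when one fails, and keep a log so the dependency chain stays visible. The provider functions are stand-ins that would wrap real HTTP calls in practice.

```python
import time
from typing import Callable, Dict, List, Tuple

# A provider is any function that takes a prompt and returns an answer.
Provider = Callable[[str], str]


class TinyGateway:
    """Hypothetical intermediary: cache first, then providers in order."""

    def __init__(self, providers: List[Tuple[str, Provider]],
                 ttl_seconds: float = 300.0, clock=time.monotonic):
        self.providers = providers
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._cache: Dict[str, Tuple[float, str]] = {}
        self.log: List[Tuple[str, str, float]] = []  # (source, outcome, seconds)

    def ask(self, prompt: str) -> str:
        # 1. Serve a recent identical prompt without leaving the machine.
        cached = self._cache.get(prompt)
        if cached is not None and self.clock() - cached[0] < self.ttl_seconds:
            self.log.append(("cache", "hit", 0.0))
            return cached[1]

        # 2. Try each provider in order, routing around failures.
        errors = []
        for name, call in self.providers:
            started = self.clock()
            try:
                answer = call(prompt)
            except Exception as exc:
                self.log.append((name, "error", self.clock() - started))
                errors.append(f"{name}: {exc}")
                continue
            self.log.append((name, "ok", self.clock() - started))
            self._cache[prompt] = (self.clock(), answer)
            return answer

        # 3. Nothing worked: say so plainly instead of hanging.
        raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in providers for illustration only.
def flaky_remote(prompt: str) -> str:
    raise TimeoutError("upstream timed out")


def backup_model(prompt: str) -> str:
    return f"echo: {prompt}"


gateway = TinyGateway([("primary", flaky_remote), ("backup", backup_model)])
print(gateway.ask("hello"))
print(gateway.ask("hello"))
print([(source, outcome) for source, outcome, _ in gateway.log])
```

The second call never touches a provider. The log shows the primary failing, the backup answering, and then a cache hit, which is exactly the kind of visibility a polished product layer tends to hide.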

Once you start doing that, you stop seeing the cloud as magic and start seeing it as dependency management.

That shift matters.

The Edge Makes You Respect the Local Machine Again

One of the strangest side effects of this era is that the more impressive cloud services become, the more affection I feel for anything I can run, inspect, cache, or control locally.

That does not mean I have become anti-cloud. I have not. Remote services are useful. Sometimes they are the only practical option.

But distance changes the emotional meaning of local capability.

Running something closer to the work is not only about speed. It is about dignity. It is about reducing the number of unseen hands between intention and execution. It is about being less exposed to the moods of dashboards, outages, pricing changes, policy shifts, and product managers on another continent who are not thinking about your use case at all.

That is part of why the open-weight AI movement feels important to me. I wrote about that more directly in The Open Source AI Rebellion: Echoes of the Early Linux Days. Open models are not merely cheaper alternatives. From this side of the map, they are also leverage. They reduce the amount of trust you are forced to outsource.

The same is true of self-hosted tools, tunnels, caches, and modest bits of infrastructure you control yourself. Even small local control changes the psychological shape of the work.

Latency Is Not Just Delay, It Is Dependence Made Visible

What latency really does, in my experience, is make dependence legible.

When the tool is fast, we tell ourselves the abstraction is working. When it is slow, the underlying arrangement starts to show through. You remember that this experience is not weightless. It sits on cables, regions, priorities, and business models.

That awareness changes how I evaluate software.

I care more now about graceful degradation. More about offline tolerance. More about whether a workflow can survive intermittent friction without collapsing into uselessness. More about whether a tool assumes constant high-quality access to distant infrastructure or respects the fact that the world is not built evenly.

This is where the language of resilience becomes more useful than the language of delight. Delight is easy to market from the center. Resilience becomes more valuable the farther you move from it.

What Good System Design Looks Like From Here

If I had to condense the lesson, it would be this: systems built for the edge should not be treated as degraded versions of systems built for the center.

They should be treated as serious design problems in their own right.

That means:

- fewer assumptions about permanent, low-latency connectivity;

- more respect for local execution and caching;

- better visibility into dependency chains;

- interfaces that communicate state honestly rather than hiding uncertainty behind endless polish; and

- a stronger sense that infrastructure choices are social choices, not just technical ones.
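Two of those points, local caching and honest state, can be shown in one small sketch. It assumes a hypothetical `StaleTolerantFetcher` that tries the remote source first, falls back to the last good value it saw, and labels every result as fresh, stale, or unavailable so the interface never has to pretend. The `remote_fetch` function and the `network` switch are illustrative stand-ins, not part of any real library.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple


@dataclass
class Result:
    value: Optional[str]
    state: str          # "fresh", "stale", or "unavailable"
    age_seconds: float  # age of a stale value; 0.0 when fresh or absent


class StaleTolerantFetcher:
    """Try the remote source; on failure, return the last good value, labeled."""

    def __init__(self, fetch: Callable[[str], str], clock=time.monotonic):
        self.fetch = fetch
        self.clock = clock
        self._last_good: Dict[str, Tuple[float, str]] = {}

    def get(self, key: str) -> Result:
        try:
            value = self.fetch(key)
        except Exception:
            saved = self._last_good.get(key)
            if saved is None:
                return Result(None, "unavailable", 0.0)
            stored_at, old_value = saved
            return Result(old_value, "stale", self.clock() - stored_at)
        # Remember every success so there is something to fall back on later.
        self._last_good[key] = (self.clock(), value)
        return Result(value, "fresh", 0.0)


# Illustrative stand-in for a remote call, with a switch to simulate an outage.
network = {"up": True}


def remote_fetch(key: str) -> str:
    if not network["up"]:
        raise ConnectionError("link down")
    return f"value for {key}"


fetcher = StaleTolerantFetcher(remote_fetch)
print(fetcher.get("report").state)  # link is up
network["up"] = False
print(fetcher.get("report").state)  # link is down, but a copy exists
print(fetcher.get("other").state)   # link is down, nothing saved
```

The design choice worth noticing is that the state travels with the value. A caller can decide to show an old report with a note about its age, which is a more respectful answer than an endless spinner.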

I do not need every product to be built around my exact circumstances. That is not realistic. But I do think too much of modern software still mistakes the most privileged network conditions for ordinary ones.

Why I Keep Thinking About This

The longer I work with modern systems, the less I believe that access is a binary condition. It is not simply that you either have the tool or you do not.

Often you have the tool, but through distance. Through delay. Through a stack that was not really imagined with your region in mind. Through a product whose smoothest version is reserved, quietly and structurally, for users standing nearer to the center of digital power.

That is why latency keeps feeling more meaningful to me than a benchmark chart can capture.

It is not only about speed.

It is about where you stand in relation to the infrastructure that increasingly shapes thought, work, speech, and daily life. And once you notice that, even a spinning indicator starts to say more than it used to.
