I can still hear the screech of a 56k modem.

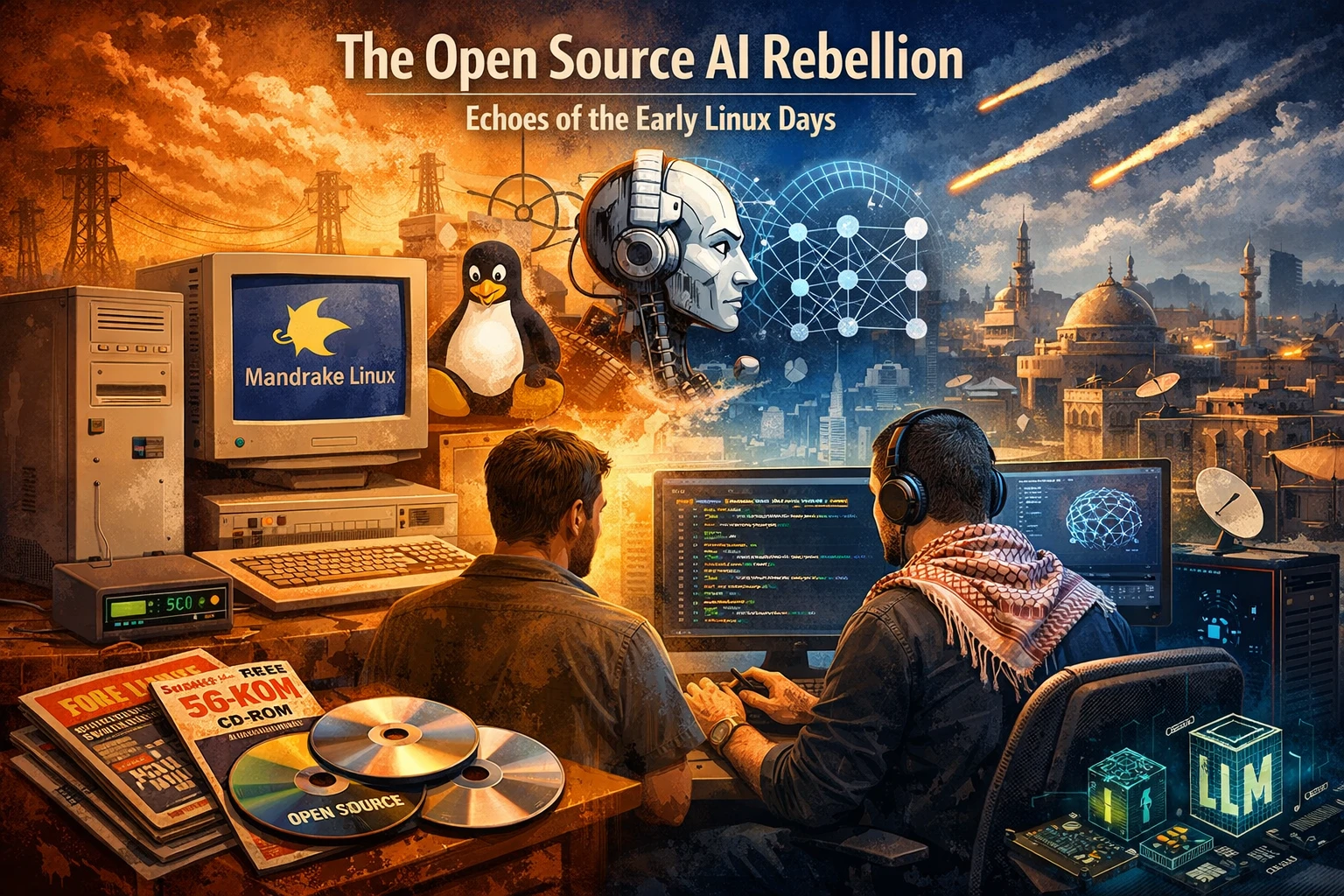

If you were around in the late 90s and early 2000s, you probably can too. Back then, in Jordan, my machine was a hulking beige Compaq tower and my operating system upgrades sometimes arrived glued to the cover of a tech magazine. One of those discs gave me my first taste of Mandrake Linux. It also gave me that distinct mixture of frustration and wonder that defined so much of early open-source life.

You would spend hours fighting dependencies, compiling kernels, and begging a sound card to produce something more dignified than silence. But when it finally worked, it felt earned. The machine was no longer a sealed appliance. It was yours.

That is exactly the feeling that came back to me recently while running a quantized local model on my desktop in Amman.

Outside, the region feels as unstable and unforgiving as ever. Inside, on my screen, a different battle is unfolding: one over whether AI becomes a public building material or a tightly metered service controlled by a handful of companies. And the more I watch this play out, the more I feel I have seen it before.

The Old War in New Clothes

In the Linux era, the divide was easy to recognize. You had the Cathedral: proprietary vendors, closed development, expensive licensing, carefully controlled access. And you had the Bazaar: messy, collaborative, opinionated, sometimes chaotic, but gloriously alive.

Today, the names have changed, but the pattern has not.

OpenAI, Anthropic, Google, and the rest of Big AI now play the role of the new Cathedrals. Their best systems are exposed through APIs, dashboards, and polished product layers that reveal just enough capability to keep you dependent, but not enough control to let you truly own the workflow. You can rent intelligence by the token, but you cannot meaningfully shape the underlying machinery.

Then there is the reopened Bazaar: open-weight models, community tooling, the Hugging Face ecosystem, llama.cpp, quantization pipelines, local inference stacks, and thousands of tinkerers refusing to accept that advanced AI must remain a gated experience.

That builder energy feels deeply familiar to me. It is the same energy that made Linux more than an operating system. It made it a culture.

FUD Always Comes Back Wearing a Suit

The proprietary side rarely says, “We want control because control is profitable.” It usually says something much more respectable.

In the early Linux years, the line was that open source was chaotic, insecure, unserious, and dangerous for business. A lot of people repeated that line with a straight face. Some probably even believed it.

Now the same instinct has reappeared around open-source AI. We are told that open-weight models are simply too risky for ordinary people to access. That only large, well-capitalized institutions can be trusted to develop and distribute this technology safely. That regulation must arrive quickly, and naturally in ways that existing giants are best positioned to survive.

I am not dismissing safety concerns. AI absolutely creates real ones. But there is a major difference between responsible governance and regulatory capture.

When a company argues that only it should be allowed to hold the keys to powerful models, I hear an old song. It is the same Fear, Uncertainty, and Doubt that proprietary software vendors once used against open systems. And just like before, it confuses centralization with safety.

Security through obscurity did not save software. Broader scrutiny did. Open collaboration did. Reproducibility did. I suspect AI will learn the same lesson.

Why This Matters More From Here

From the Middle East, this debate feels less abstract than it does in many Western think pieces.

Linux mattered here because it lowered the price of participation. A developer in Amman, Cairo, or Beirut did not need permission from a giant vendor to learn, build, or deploy something serious. Open systems gave people in this region leverage.

Open-source AI raises the stakes even further, because now the issue is not just cost. It is sovereignty.

When Arabic is treated as a secondary language, when regional context is flattened, and when critical tools depend on infrastructure controlled far away, you begin to see the problem clearly. I ran into part of that reality in my earlier experiments with AI proxies, Arabic prompts, and AI-assisted media workflows. If your only path to AI runs through somebody else’s API, then you inherit somebody else’s priorities, filters, and fragility.

That is a serious weakness in a region where infrastructure is not something you take for granted.

Local and open-weight models do not solve everything, but they change the power relationship. We can run them closer to the work. We can keep sensitive data local. We can adapt them to Arabic corpora, regional institutions, and use cases that will never top the roadmap of a Silicon Valley product team. Even when the cloud is available, there is strategic value in not being wholly dependent on it.

The Return of the Tinkerer

One reason this moment feels so alive is that it has pulled software back toward experimentation.

For a long stretch, modern development often felt like stitching together polished services owned by other people. Useful, yes. Efficient, often. But spiritually? A little sterile.

Open-source AI has brought back some of the old magic. Running local models, tuning prompts, testing quantization tradeoffs, building private routing layers, and squeezing surprising performance out of ordinary hardware all feel closer to the hacker ethos I grew up with. It is not nostalgic in a shallow way. It is nostalgic because the builder is being invited back into the room.

What I Think Happens Next

Linux did not destroy every proprietary company. That was never the real outcome. What it did was far more consequential: it became infrastructure. Quietly, relentlessly, and at enormous scale.

I think open-source AI is heading in the same direction.

The biggest commercial models will remain important, especially for organizations chasing frontier-scale performance. But the broader foundation of the next era, the layer that developers, schools, NGOs, startups, and independent builders actually shape, will increasingly belong to the open ecosystem. That is where adaptation happens fastest. That is where local needs get served. That is where resilience comes from.

There is a strange comfort in realizing this while sitting under an uncertain sky. The world outside can feel closed, brittle, and beyond your control. But on the screen, the Bazaar is still open.

And if history rhymes the way I think it does, that matters more than many people realize.
