The first time I put Jules in front of a real codebase, I expected one of two outcomes: either a flashy demo that fell apart under pressure, or a productivity boost wrapped in so much cleanup that the gain would evaporate.

What I got was more interesting than either.

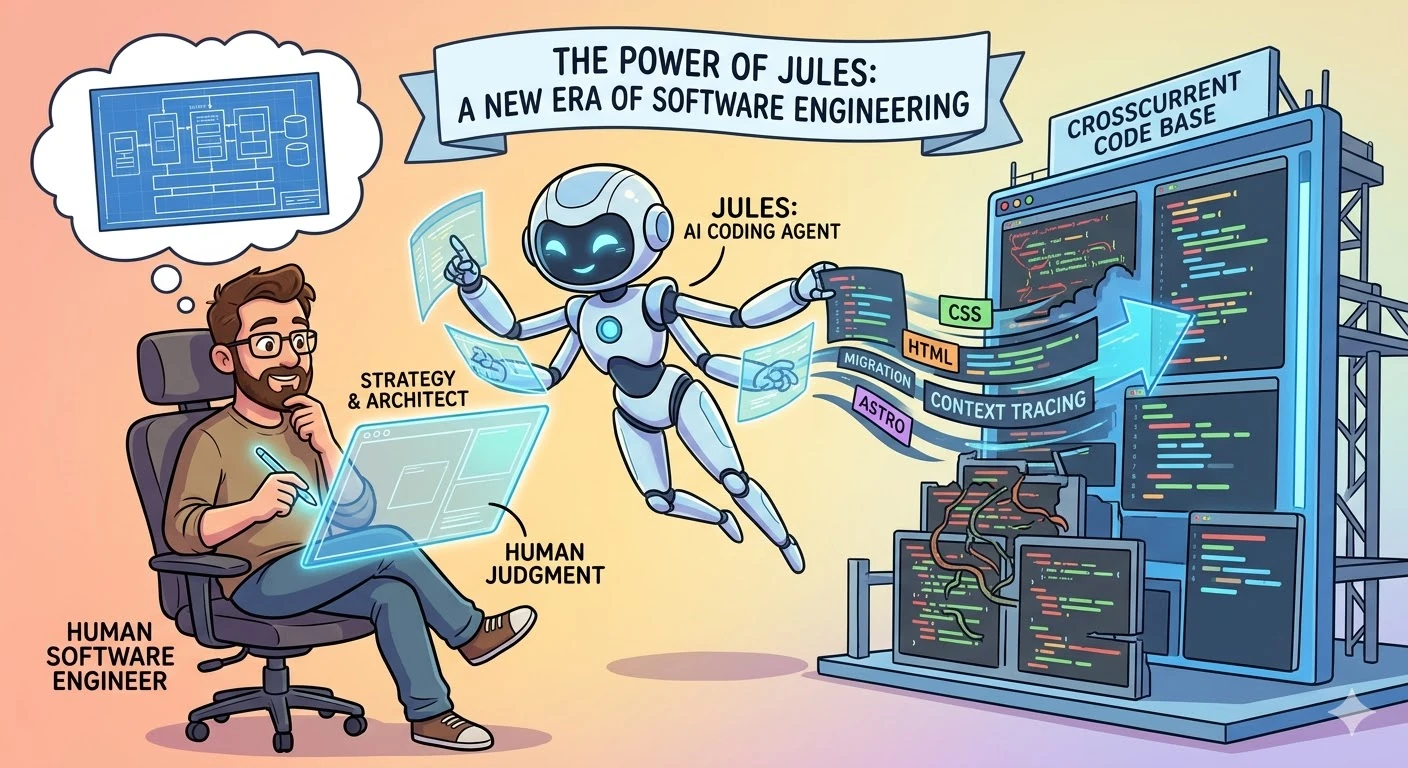

Jules did not feel like magic, and that is precisely why I took it seriously. It felt like the early shape of a new kind of collaborator: fast, tireless, surprisingly capable in the middle layers of implementation, and still in need of direction, review, and constraints from a human who understands the broader system.

The First Real Test Was a Living Codebase

One of my clearest experiments was this very site.

Crosscurrent Code did not begin as an Astro project. It started in an earlier form, built on older assumptions and a different stack. Watching Jules work through parts of that transition gave me a more grounded sense of what AI coding agents are actually good at. They are good at reading across a lot of context very quickly. They are good at tracing dependencies. They are good at turning a broad request into a sequence of concrete implementation steps.

That matters more than people sometimes realize.

Most software work is not the dramatic invention of a brilliant algorithm from scratch. A lot of it is translation. Read the existing system. Understand how the parts fit together. Find the safe place to intervene. Make the change. Validate the result. Repeat. Jules is unusually well-suited to that middle zone.

What Impressed Me Was Not Just Speed

Yes, speed is part of the appeal. Anyone who has watched an agent read files, trace references, edit code, and run validation in a tight loop knows the throughput can be impressive.

But raw speed is not the most important thing here.

What impressed me more was persistence across context. Jules could keep moving through the boring parts that often slow humans down: searching through files, comparing patterns, carrying a plan across multiple edits, and staying patient with repetitive implementation detail. Human engineers get tired, distracted, or simply bored. Agents do not experience that friction in the same way.

That does not make them better engineers. It makes them valuable in a different way.

Fluency Is Not Judgment

This is where the conversation needs a little discipline.

It is very easy to watch a coding agent move briskly through a task and start projecting more certainty onto it than the situation deserves. I wrote about the broader version of that problem in "Decoding the AI Black Box: My Enlightening Dialogue with Claude 3.5 Sonnet." The short version applies here too: fluent output is not the same thing as trustworthy understanding.

Jules can be remarkably useful, but usefulness does not remove the need for human judgment. Someone still has to decide whether the requested change is strategically sound. Someone still has to catch subtle regressions. Someone still has to notice when the agent is confidently heading in the wrong direction because the prompt, the constraints, or the architecture were underspecified.

That human layer is not a ceremonial approval step. It is the difference between collaboration and delegation without accountability.

What Changes About the Job

The more I use tools like Jules, the less I think the future of software engineering is about replacement and the more I think it is about elevation.

If an agent can absorb more of the mechanical work, then the human role shifts upward. Architecture matters more. Taste matters more. Product judgment matters more. The ability to define constraints clearly becomes more valuable. Review quality becomes more valuable. Knowing what not to build becomes more valuable.

In other words, the craft does not disappear. It gets redistributed.

This may be uncomfortable for engineers who built their identity around being the fastest person to write boilerplate or chase down repetitive implementation tasks. But it is also liberating. A lot of the work that made software engineering feel procedural and exhausting can now be compressed, which leaves more room for the parts that actually require discernment.

Why I Think Jules Matters

What convinced me was not the marketing language around AI software engineering. It was the lived experience of using the tool on real tasks and seeing where it genuinely reduced friction.

Jules will not replace the need for experienced engineers. If anything, it sharpens the need for them. But it does change the texture of the work. It reduces the distance between intent and execution. It makes it easier to try things, validate them, and iterate without burning so much human attention on the plumbing.

That is not a minor shift. That is a structural one.

I do not think the future belongs to engineers who reject these tools out of pride, nor to teams that trust them blindly because the demos are slick. It belongs to people who learn how to collaborate with them properly.

That, to me, is the real power of Jules.
