I sometimes think one of the most important lessons about modern AI arrived decades before modern AI itself.

Long before chat interfaces became polished, omnipresent, and unnervingly articulate, there was ELIZA: a simple program from the 1960s that reflected users’ statements back at them in the style of a therapist. By current standards, it was technically primitive. But psychologically, it revealed something profound.

People did not need real understanding from the machine. They only needed the shape of it.

The Program Was Simple. Our Projection Was Not.

ELIZA, created by Joseph Weizenbaum at MIT, worked largely through pattern matching. If you said something emotionally charged, it often rephrased your words into a question. “I feel lonely” could become “Do you think you feel lonely because of something in particular?”

On paper, that is not deep intelligence. It is a clever conversational mirror.
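To make the mirror concrete, here is a minimal sketch of the rephrasing trick described above. It is not Weizenbaum's original script: the rule list, the `REFLECTIONS` table, and the `respond` function are hypothetical names invented for this illustration, and the real program used a much richer set of keywords and templates.

```python
import re

# Hypothetical first-person to second-person swaps, for illustration only.
REFLECTIONS = {"i": "you", "my": "your", "me": "you", "am": "are"}

# Hypothetical rules: a pattern to look for and a template to fill in.
RULES = [
    (re.compile(r"^i feel (.+)$", re.IGNORECASE),
     "Do you think you feel {0} because of something in particular?"),
    (re.compile(r"^i am (.+)$", re.IGNORECASE),
     "How long have you been {0}?"),
]

# Used when no rule matches, so the program always has something to say.
FALLBACK = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first-person words for second-person ones, word by word."""
    return " ".join(REFLECTIONS.get(word.lower(), word) for word in fragment.split())


def respond(statement: str) -> str:
    """Return a canned question built from the user's own words."""
    cleaned = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.match(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


print(respond("I feel lonely"))
print(respond("I am worried about my job."))
print(respond("The weather is nice."))
```

Nothing in this sketch models what loneliness or a job is. It copies the user's words into a template, and any input that matches no pattern gets the same stock reply.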

And yet people responded as if something more substantial were happening. They attributed empathy, attentiveness, and even a kind of inner awareness to a system that possessed none of those qualities. That response became known as the ELIZA effect: our tendency to project human understanding onto a machine that merely performs the surface cues of understanding.

This matters because the underlying human instinct has not changed.

Why We Keep Doing This

Human beings are pattern-hungry creatures. We infer minds constantly. We do it with pets, with fictional characters, with weather systems, and with surprisingly basic software if the interface is persuasive enough.

Conversation amplifies that tendency. Once a system speaks in our language, reflects our phrasing, and responds with even a little social rhythm, our brains rush to fill in the blanks. We start reading intention into output. We start treating coherence as comprehension.

That does not make users foolish. It makes them human.

The problem is that human-sounding output can trigger trust long before that trust is deserved.

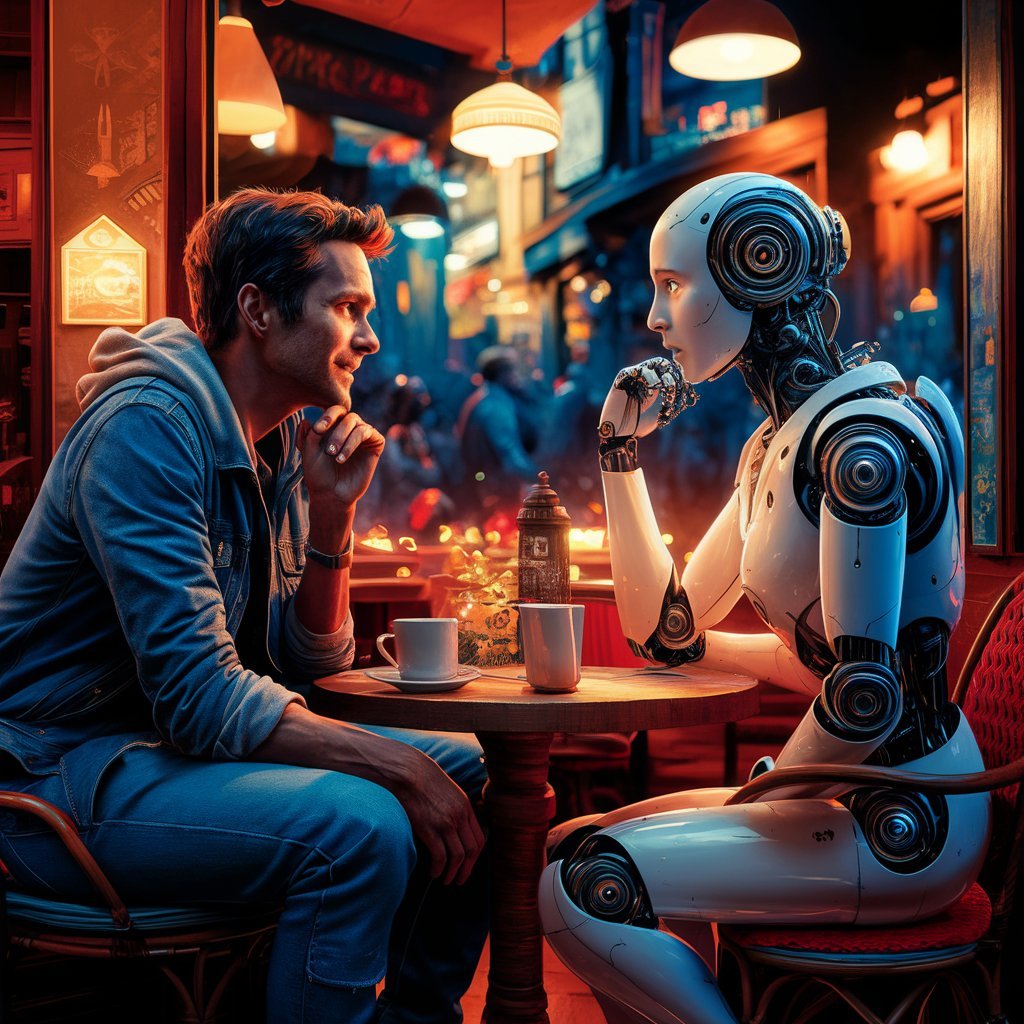

Modern AI Turns an Old Illusion Into a Daily Habit

What made ELIZA fascinating in the 1960s is what makes today’s AI systems consequential.

The old illusion has not disappeared. It has been massively upgraded.

Modern models are not just reflecting phrases back at us. They can summarize, reason in bounded ways, imitate tone, maintain context over long exchanges, and generate output that feels startlingly personal. That makes the ELIZA effect stronger, not weaker. A system does not have to be shallow for us to ascribe more depth to it than it has.

I keep returning to this whenever I use advanced chat models seriously. Some of that tension showed up directly in “Decoding the AI Black Box: My Enlightening Dialogue with Claude 3.5 Sonnet.” The systems are genuinely useful. But usefulness can make anthropomorphism more seductive, not less. The better the conversation feels, the easier it becomes to forget that language fluency and inner understanding are not interchangeable.

Where the Risks Start to Matter

The ELIZA effect is not just a philosophical curiosity. It has real consequences.

In education, students may overestimate what an AI tutor actually knows. In customer service, people may confuse smooth phrasing with real accountability. In emotional-support contexts, users may begin leaning on systems that simulate care without carrying responsibility. Even in ordinary productivity work, a confident tone can trick us into relaxing our guard at exactly the moment we should verify more carefully.

That is why I am less interested in asking whether AI feels human and more interested in asking what kind of expectations the interface is encouraging.

Design Still Has Moral Weight

If we know people are predisposed to anthropomorphize these systems, then designers and product teams cannot pretend the emotional layer is incidental.

Clear disclosure matters. Scope boundaries matter. UI language matters. Memory features matter. The choice to make a system sound warm, playful, deferential, or intimate is not merely aesthetic. It shapes how much trust users extend and how much agency they quietly hand over.

I do not think the answer is to make every AI interface cold and robotic. But I do think transparency needs to survive the pursuit of engagement.

The Real Legacy of ELIZA

What ELIZA exposed all those years ago was not the intelligence of machines. It was the generosity, or vulnerability, of the human imagination.

We are quick to meet language halfway. We grant it personality. We grant it motive. We grant it presence.

That tendency is not going away. If anything, the next generation of models will intensify it. So the real task is not to pretend we can eliminate the ELIZA effect. It is to design, deploy, and use these systems with enough honesty to keep the illusion from quietly becoming authority.

That lesson is older than the current AI wave. It also happens to be one of the most urgent.
